Jetson Software

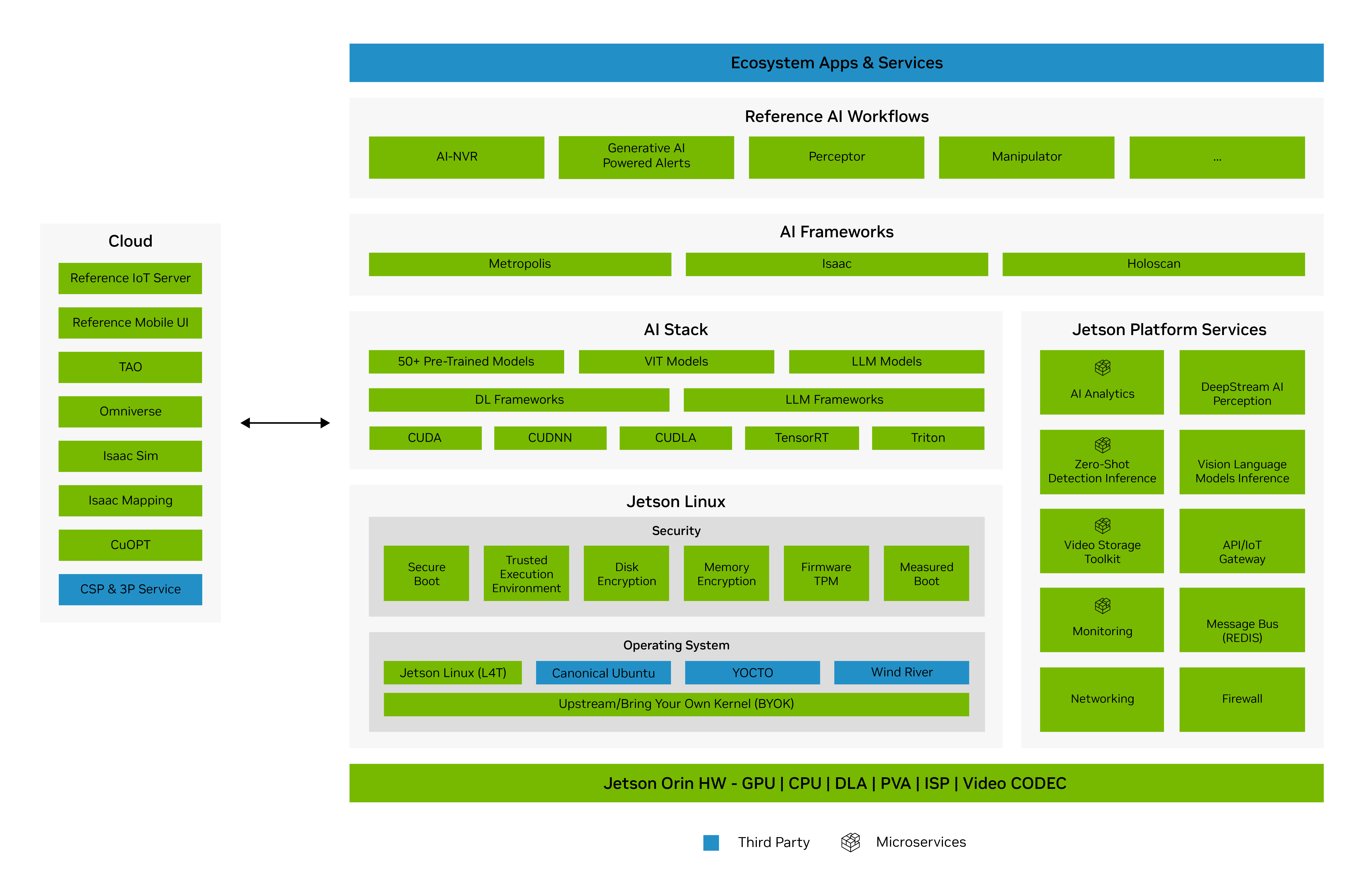

All NVIDIA Jetson modules and developer kits are supported by the NVIDIA Jetson software stack. The NVIDIA AI software stack is designed not only to accelerate AI applications but also to accelerate time to market by democratizing AI application development. The software stack provides an end-to-end development workflow, from cloud to the edge.

The Jetson software stack begins with the NVIDIA JetPack SDK, which provides Jetson Linux, developer tools, CUDA-X accelerated libraries, and other NVIDIA technologies.

JetPack enables end-to-end acceleration for your AI applications, with NVIDIA TensorRT and cuDNN for accelerated AI inferencing, CUDA for accelerated general computing, VPI for accelerated computer vision and image processing, Jetson Linux APIs for accelerated multimedia, and libArgus and V4L2 for accelerated camera processing.

The NVIDIA Container Runtime is also included in JetPack, enabling cloud-native technologies and workflows at the edge. Transform your experience of developing and deploying software by containerizing your AI applications and managing them at scale with cloud-native technologies.

Jetson Linux provides the foundation for your applications with a Linux kernel, bootloader, NVIDIA drivers, flashing utilities, sample filesystem, and toolchains for the Jetson platform. It also includes security features, over-the-air update capabilities and much more.

JetPack also comes with a collection of system services that provide fundamental capabilities for building edge AI solutions. These services simplify integration into developer workflows and spare developers the arduous task of building those capabilities from the ground up.

Generative AI brings a new class of AI that enables models to understand the world in a more open way than previous methods. These models can comprehend natural language input and can give you a richer understanding of the scene.

NVIDIA TAO simplifies the time-consuming parts of a deep learning workflow, from data preparation to training to optimization, shortening the time to value. Speed up your development by 10X when you start with production-ready pre-trained AI models from the NVIDIA NGC catalog. These models have been trained to high accuracy for domains including computer vision, conversational AI, and more.

Data collection and annotation are expensive and laborious processes. Simulation can help you efficiently meet this need for data. NVIDIA Omniverse Replicator uses simulation to generate synthetic data an order of magnitude faster and cheaper than gathering real-world data. With Omniverse Replicator you can quickly create diverse, massive, and accurate datasets for training AI models.

NVIDIA Triton Inference Server simplifies deployment of AI models at scale. Triton Inference Server is open source and provides a single standardized inference platform that can support multi-framework model inferencing in different deployments such as data center, cloud, embedded devices, and virtualized environments. It supports different types of inference queries through advanced batching and scheduling algorithms and supports live model updates.
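
To make the idea of a single standardized inference interface more concrete, here is a minimal sketch of how a client might assemble a request for Triton's HTTP endpoint, which follows the KServe v2 inference protocol. It only builds the URL and JSON body and does not contact a server. The model name `my_model`, the input name `INPUT0`, the tensor shape, and the `localhost:8000` address are illustrative assumptions, not values from any particular deployment.

```python
import json


def infer_url(base_url, model_name):
    """Build the KServe v2 style inference URL for a model."""
    return f"{base_url.rstrip('/')}/v2/models/{model_name}/infer"


def build_infer_request(input_name, shape, datatype, data):
    """Return the JSON-serializable body for a single-input inference request.

    `data` is a flat list whose length must match the product of `shape`.
    """
    expected = 1
    for dim in shape:
        expected *= dim
    if len(data) != expected:
        raise ValueError(
            f"shape {list(shape)} needs {expected} values, got {len(data)}"
        )
    return {
        "inputs": [
            {
                "name": input_name,
                "shape": list(shape),
                "datatype": datatype,
                "data": list(data),
            }
        ]
    }


if __name__ == "__main__":
    # Hypothetical model and tensor names, chosen for illustration only.
    body = build_infer_request("INPUT0", [1, 4], "FP32", [0.1, 0.2, 0.3, 0.4])
    print(infer_url("http://localhost:8000", "my_model"))
    print(json.dumps(body, indent=2))
```

Because the request format does not depend on the framework behind the model, the same client code could in principle target models served from different backends, provided the input names, shapes, and data types match the model's configuration.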

NVIDIA Riva is a fully accelerated SDK for building multimodal conversational AI applications using an end-to-end deep learning pipeline. The Riva SDK includes pretrained conversational AI models, the NVIDIA TAO Toolkit, and optimized end-to-end skills for speech, vision, and natural language processing (NLP) tasks.

NVIDIA DeepStream SDK delivers a complete streaming analytics toolkit for AI-based multi-sensor processing and video and image understanding on Jetson. DeepStream is an integral part of NVIDIA Metropolis, the platform for building end-to-end services and solutions that transform pixel and sensor data to actionable insights.

NVIDIA Isaac ROS offers hardware-accelerated packages that make it easier for ROS developers to build high-performance solutions on NVIDIA hardware. NVIDIA Isaac Sim, powered by Omniverse, is a scalable robotics simulation application. It includes Replicator, a tool for generating diverse synthetic datasets to train perception models. Isaac Sim also powers photorealistic, physically accurate virtual environments for developing, testing, and managing AI-based robots.