NVIDIA Metropolis for Developers

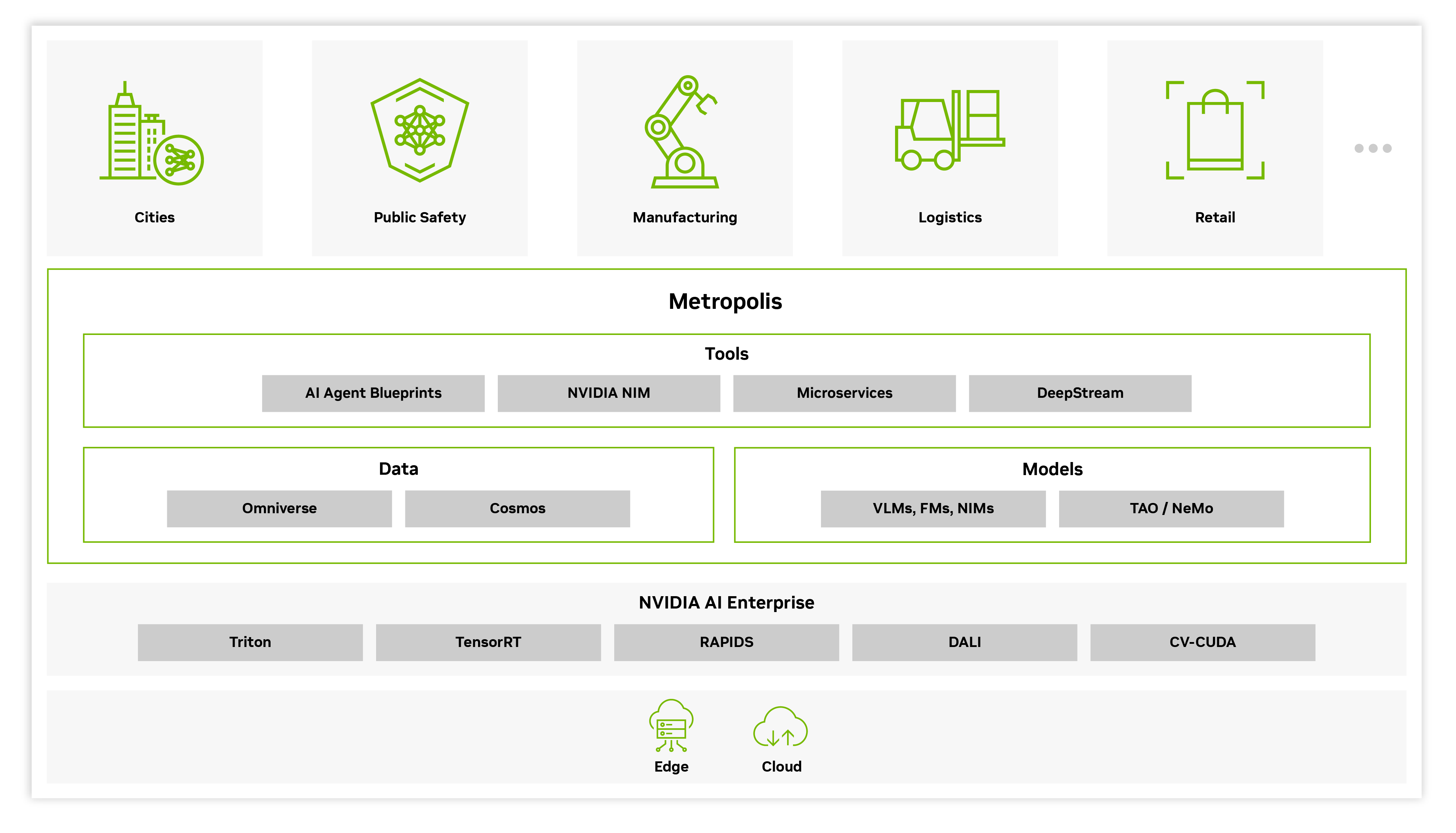

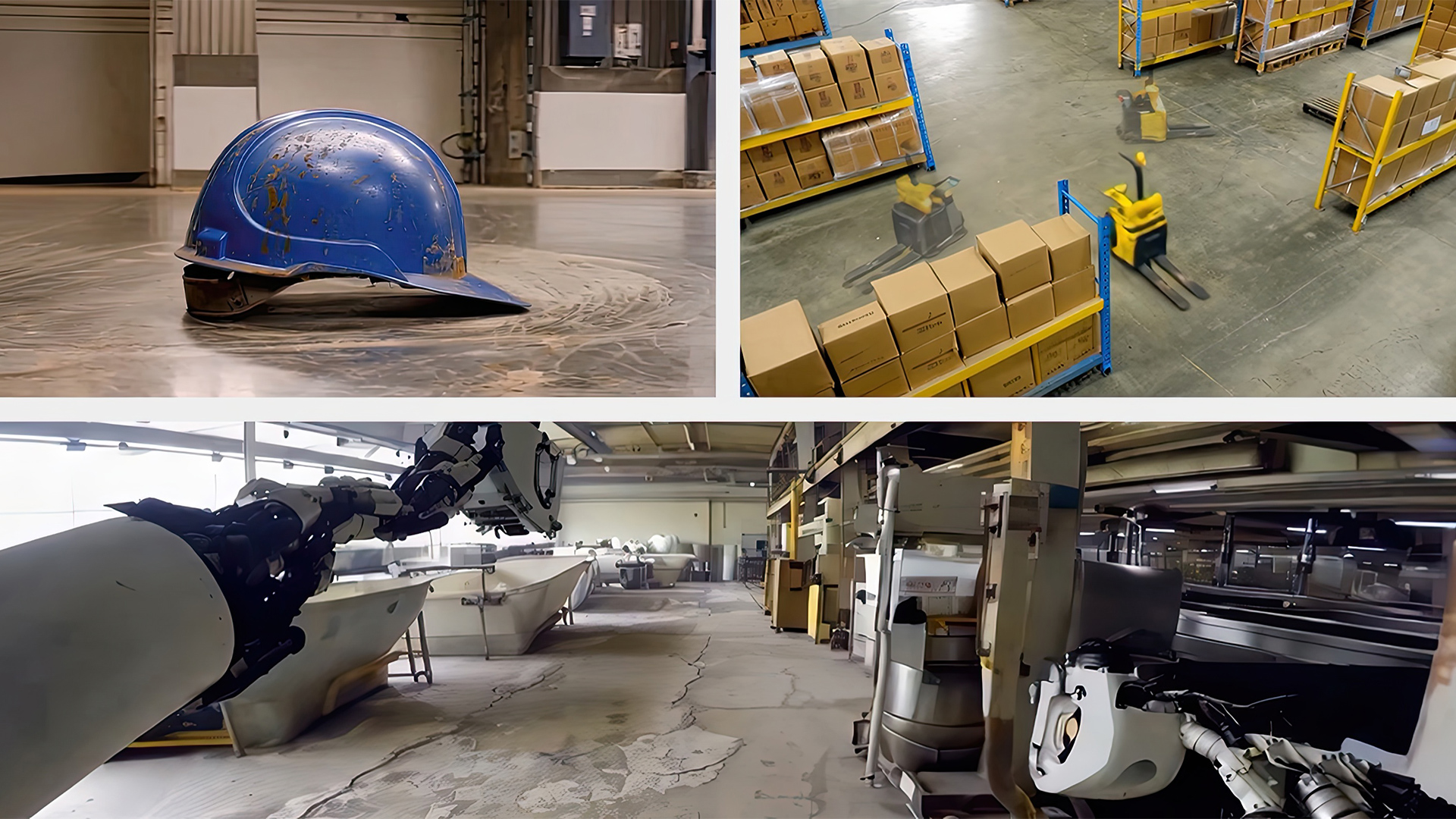

Discover NVIDIA Metropolis, an advanced collection of developer workflows and tools that delivers exceptional scale, throughput, and cost-effectiveness, along with faster time to production. Metropolis provides everything you need to build, deploy, and scale vision AI agents and applications, from the edge to the cloud.

Get Started

Explore All the Benefits

Faster Builds

Use and tune high-performance vision foundation models to streamline AI training for your unique industry. NVIDIA AI Blueprints and cloud-native modular microservices are designed to help you accelerate development.

Lower Cost

Powerful SDKs, including NVIDIA TensorRT™, DeepStream, and TAO Toolkit, reduce overall solution cost. Generate synthetic data, boost accuracy, maximize inference throughput, and optimize hardware usage on NVIDIA platforms and infrastructure.

Flexible Deployments

Deploy with flexibility using NVIDIA Inference Microservices (NIM™), cloud-native Metropolis microservices, and containerized apps, with options for on-premises, cloud, or hybrid deployments.

Powerful Tools for AI-Enabled Video Analytics

The Metropolis suite of SDKs provides a variety of starting points for AI application development and deployment.

State-of-the-Art Vision Language Models and Vision Foundation Models

Access a wide range of advanced AI models to build vision AI applications that bring vision and language together to enable interactive visual question-answering. Vision language models (VLMs) are multimodal, generative AI models capable of understanding and processing video, images, and text. Computer vision foundation models, including vision transformers (ViTs), analyze and interpret visual data to create embeddings or perform tasks like object detection, segmentation, and classification.
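
Embeddings produced by a vision foundation model are commonly compared with cosine similarity, for example to find the stored frames closest to a query. The sketch below ranks a few frames that way. It assumes the embeddings have already been computed; the frame IDs and the 4-dimensional vectors are made-up toy values standing in for real model outputs.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("a zero vector has no direction")
    return dot / (norm_a * norm_b)


def rank_by_similarity(query: list[float], gallery: dict[str, list[float]]) -> list[tuple[str, float]]:
    """Return (item_id, score) pairs sorted from most to least similar to the query."""
    scored = [(item_id, cosine_similarity(query, vec)) for item_id, vec in gallery.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Toy embeddings standing in for real model outputs (illustrative values only).
gallery = {
    "frame_001": [0.9, 0.1, 0.0, 0.2],
    "frame_002": [0.1, 0.8, 0.3, 0.0],
    "frame_003": [0.8, 0.2, 0.1, 0.3],
}
query = [1.0, 0.0, 0.0, 0.25]
for item_id, score in rank_by_similarity(query, gallery):
    print(f"{item_id}: {score:.3f}")
```

At larger scale, the same comparison is usually delegated to a vector database or an approximate nearest-neighbor index rather than a linear scan like this one.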

Explore NVIDIA NIM for Vision

.jpg)

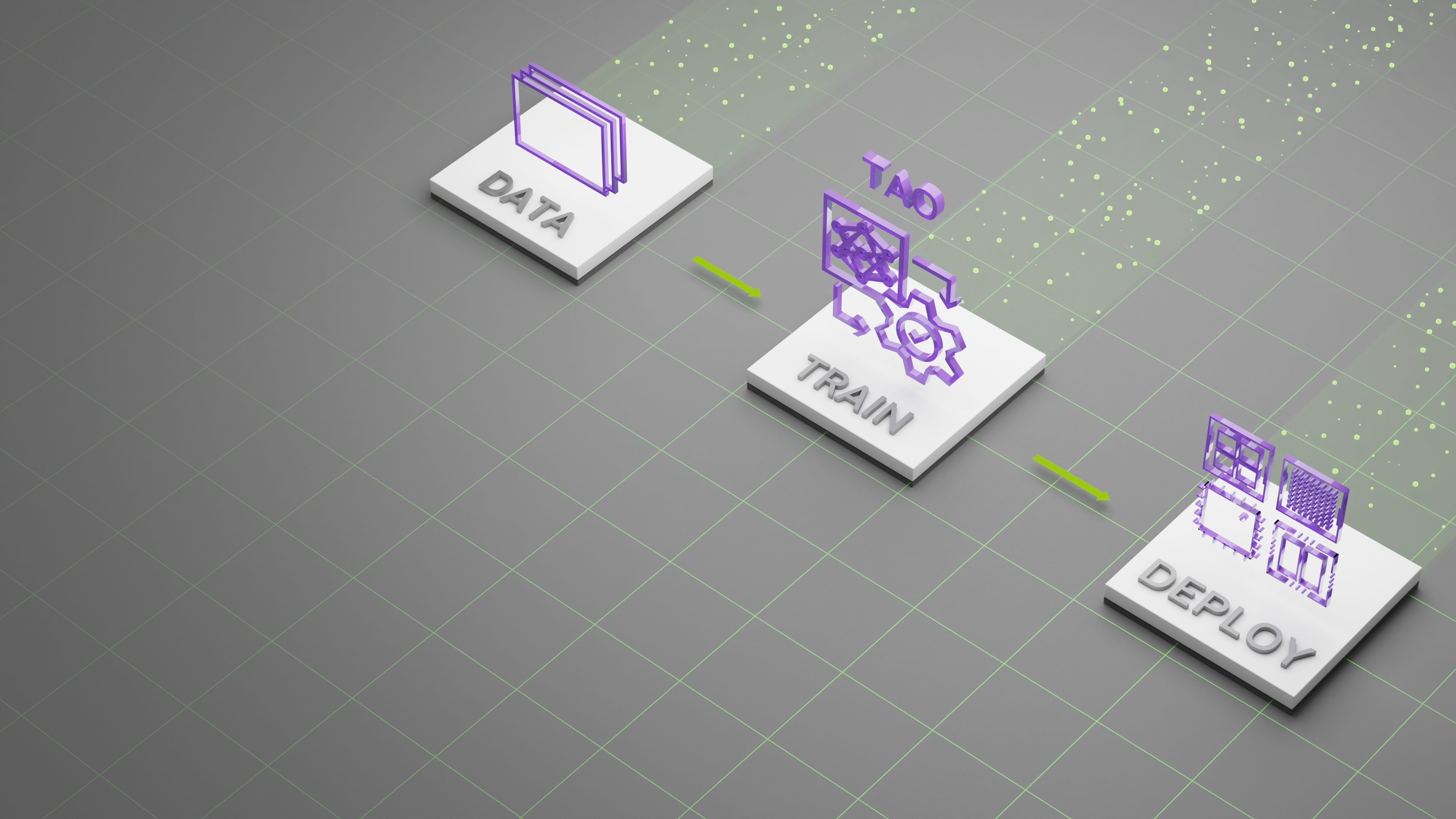

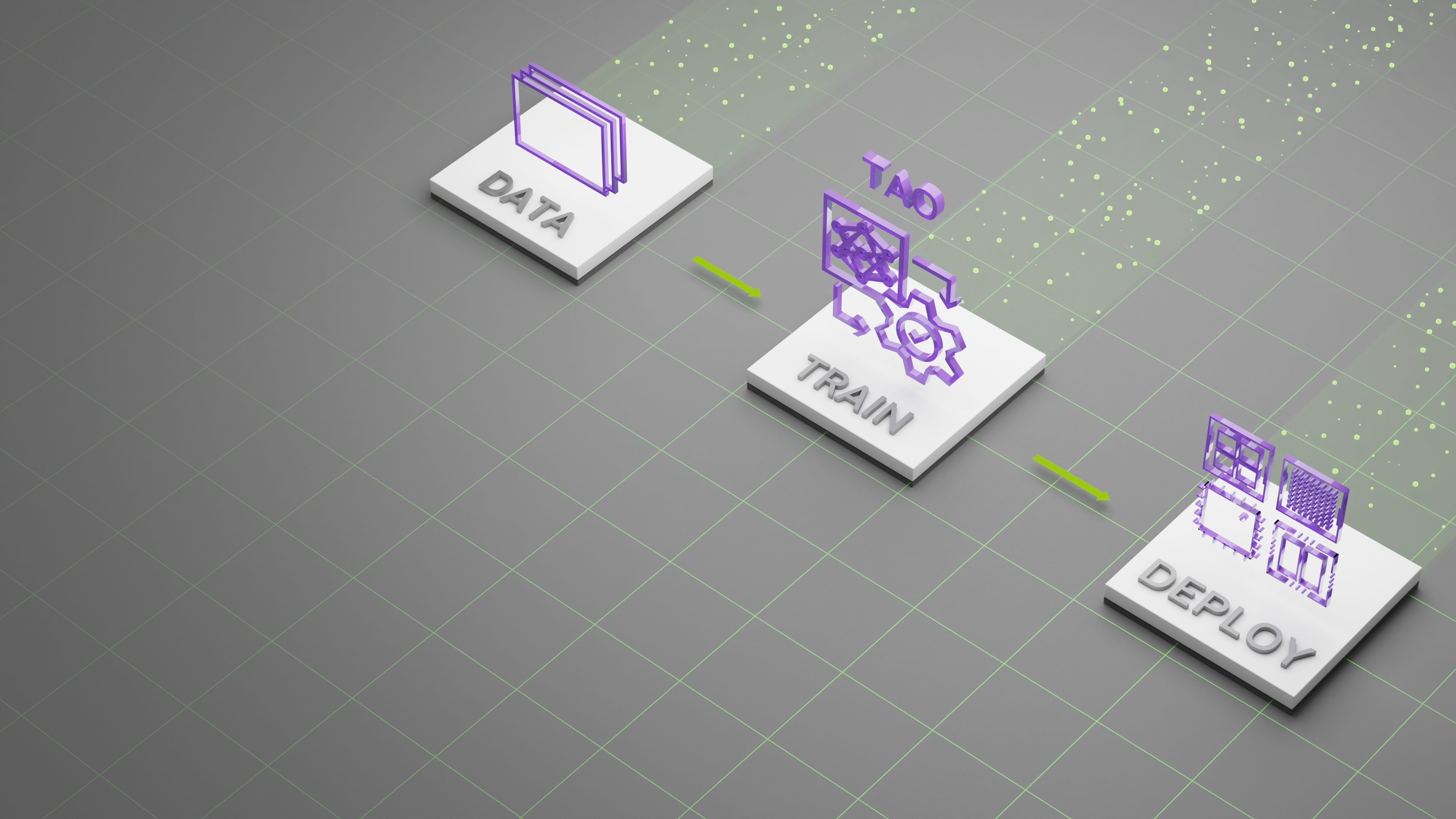

TAO Toolkit

The Train, Adapt, and Optimize (TAO) Toolkit is a low-code AI model development solution that uses transfer learning so you can fine-tune NVIDIA pretrained vision language models and vision foundation models with your own data and optimize them for inference, without AI expertise or a large training dataset.
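
The idea behind transfer learning can be shown in its simplest form, linear probing: keep a pretrained feature extractor frozen and train only a small classification head on a handful of labeled examples. The sketch below does not use TAO or any of its APIs. `frozen_backbone` is a hypothetical fixed function standing in for a pretrained model, and the six data points are made up.

```python
import math


def frozen_backbone(x: tuple[float, float]) -> list[float]:
    """Hypothetical stand-in for a pretrained feature extractor; its weights never change."""
    return [x[0] + x[1], x[0] - x[1]]


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def train_head(features: list[list[float]], labels: list[int], lr: float = 0.5, epochs: int = 200):
    """Fit only a logistic-regression head on the frozen features with plain SGD."""
    w = [0.0] * len(features[0])
    b = 0.0
    for _ in range(epochs):
        for f, y in zip(features, labels):
            p = sigmoid(sum(wi * fi for wi, fi in zip(w, f)) + b)
            grad = p - y  # derivative of the log loss with respect to the logit
            w = [wi - lr * grad * fi for wi, fi in zip(w, f)]
            b -= lr * grad
    return w, b


def predict(w: list[float], b: float, x: tuple[float, float]) -> int:
    """Run the frozen backbone, then the trained head; return class 0 or 1."""
    f = frozen_backbone(x)
    return int(sum(wi * fi for wi, fi in zip(w, f)) + b > 0)


# A tiny made-up labeled set: label 1 when the first coordinate is positive.
data = [((2, 1), 1), ((1, -2), 1), ((3, 0), 1), ((-2, 1), 0), ((-1, -2), 0), ((-3, 0), 0)]
feats = [frozen_backbone(x) for x, _ in data]
w, b = train_head(feats, [y for _, y in data])
print([predict(w, b, x) for x, _ in data])
```

Only the head's few parameters are learned here, which is why a small dataset can be enough. Full fine-tuning, by contrast, also updates some or all of the backbone's weights.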

Learn More About TAO Toolkit

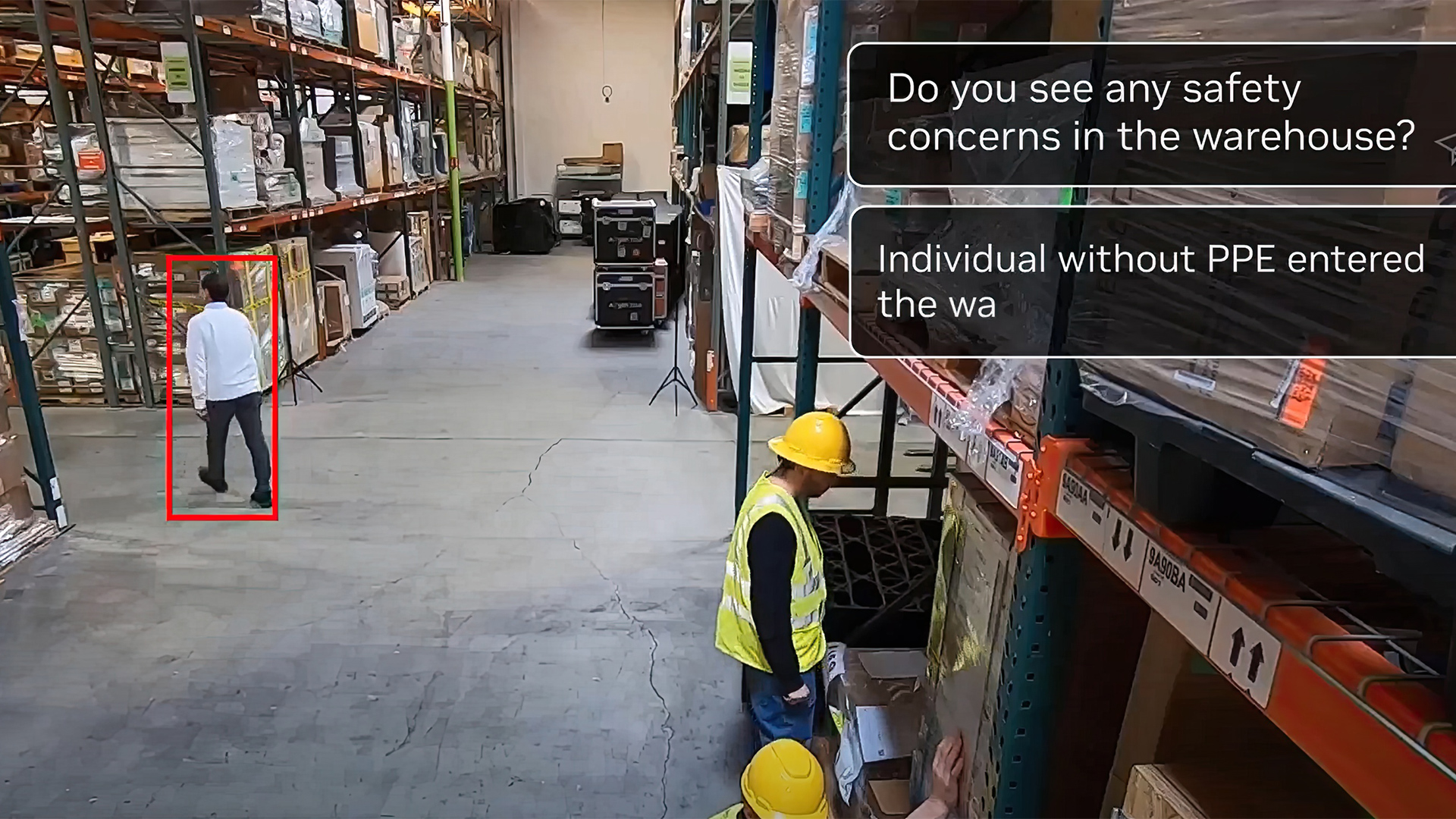

AI Agent Blueprints

The NVIDIA AI Blueprint for video search and summarization (VSS) makes it easy to get started building and customizing video analytics AI agents—all powered by generative AI, vision language models (VLMs), large language models (LLMs), and NVIDIA NIM. The video analytics AI agents are given tasks through natural language and can process vast amounts of video data to provide critical insights that help a range of industries optimize processes, improve safety, and cut costs.

The AI agents built from the blueprint can analyze, interpret, and process video data at scale, producing video summaries up to 200X faster than reviewing the videos manually. The blueprint fast-tracks AI agent development by bringing together various generative AI models and services. It also offers flexibility through support for a wide range of NVIDIA and third-party VLMs and LLMs, as well as optimized deployment options from edge to cloud.
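
Long videos generally cannot be sent to a VLM in a single request, so a common pattern is to split the video into fixed-length chunks, caption each chunk, and then have an LLM combine the captions into one summary. The sketch below shows only the chunk-planning step. The chunk length and overlap are illustrative assumptions, not the blueprint's defaults, and this is not its actual implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    index: int
    start_s: float  # chunk start time in seconds
    end_s: float    # chunk end time in seconds


def plan_chunks(duration_s: float, chunk_s: float, overlap_s: float = 0.0) -> list[Chunk]:
    """Split [0, duration_s) into chunks of chunk_s seconds that overlap by overlap_s."""
    if chunk_s <= 0:
        raise ValueError("chunk_s must be positive")
    if not 0 <= overlap_s < chunk_s:
        raise ValueError("overlap_s must be in [0, chunk_s)")
    step = chunk_s - overlap_s  # how far each new chunk advances
    chunks: list[Chunk] = []
    start = 0.0
    while start < duration_s:
        end = min(start + chunk_s, duration_s)  # the last chunk may be shorter
        chunks.append(Chunk(len(chunks), start, end))
        if end >= duration_s:
            break
        start += step
    return chunks


# A 10-minute video split into 60 s chunks with 5 s of overlap (illustrative values).
for c in plan_chunks(600, 60, 5):
    print(c)
```

The overlap keeps an event that straddles a chunk boundary visible in at least one chunk, at the cost of captioning a few seconds of video twice.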

Explore NVIDIA AI Blueprint for Video Search and Summarization

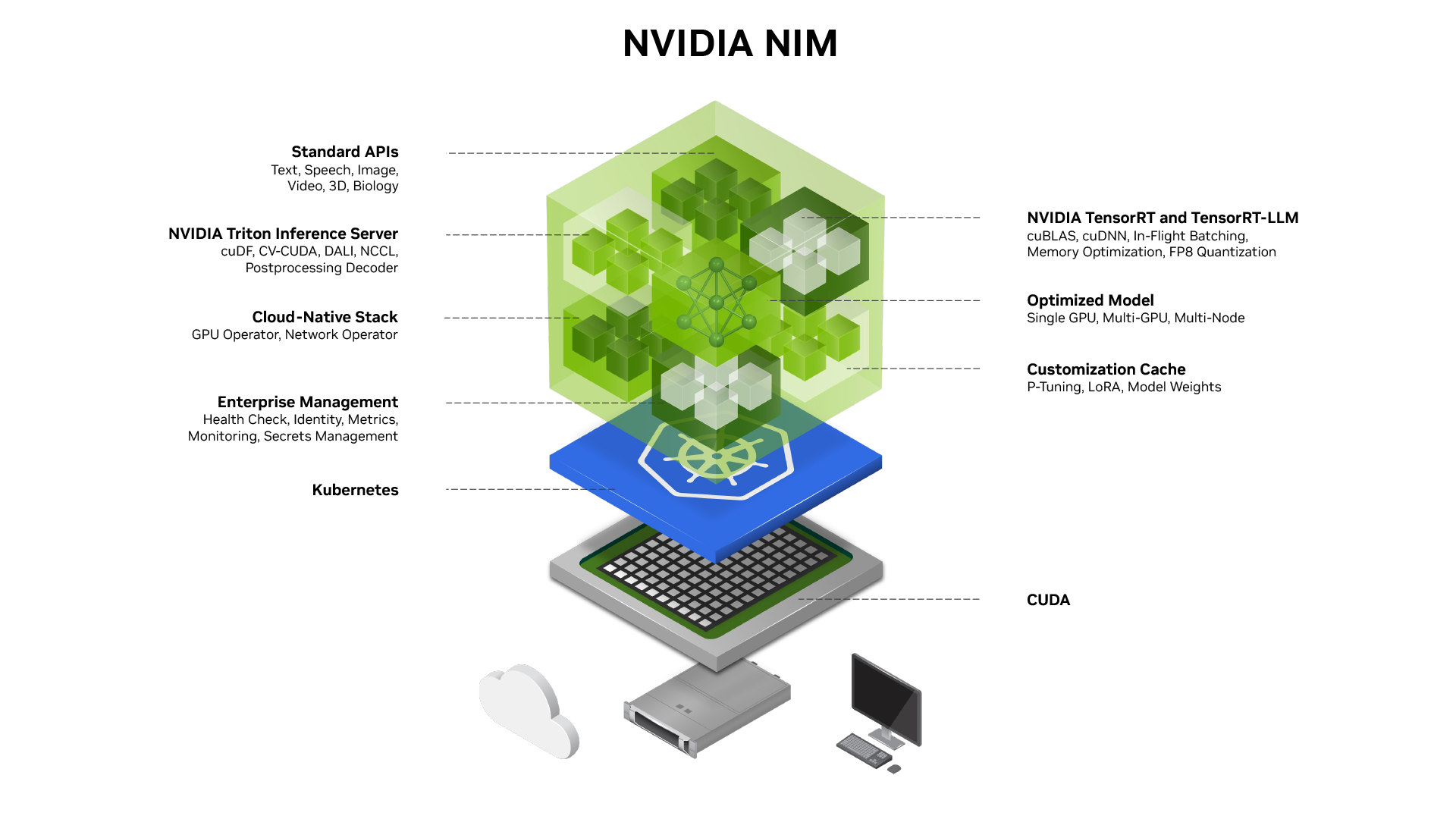

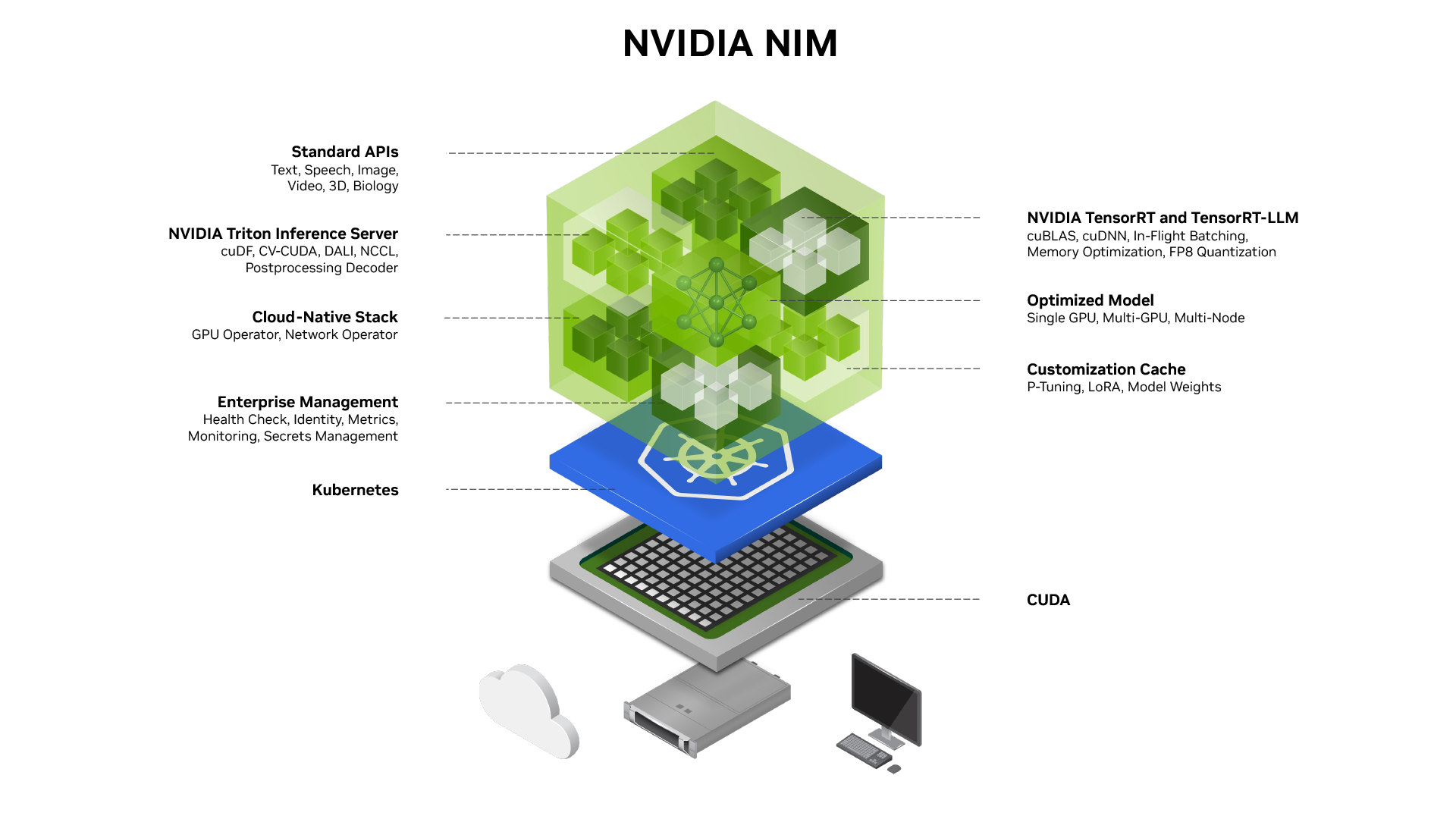

NVIDIA NIM

NVIDIA NIM (NVIDIA Inference Microservices) is a set of easy-to-use microservices designed for secure, reliable deployment of high-performance AI model inference across the cloud, data centers, and workstations. Supporting a wide range of AI models, including foundation models, LLMs, VLMs, and more, NIM provides seamless, scalable AI inference on premises or in the cloud through industry-standard APIs.
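
Many NIM microservices, including a number of VLM NIMs, expose an OpenAI-compatible chat-completions endpoint. Assuming such an endpoint, the sketch below builds a visual question-answering request that sends one inline image plus a question, using only the Python standard library. `BASE_URL`, `MODEL`, and the image path are placeholders; check the API reference of the specific NIM you deploy before relying on this exact payload shape.

```python
import base64
import json
import urllib.request

# Placeholder values: replace with the endpoint and model name of the NIM you deploy.
BASE_URL = "http://localhost:8000/v1"
MODEL = "example/vision-model"  # hypothetical model name


def build_vqa_payload(image_bytes: bytes, question: str, mime: str = "image/jpeg") -> dict:
    """Build an OpenAI-style chat-completions body with one base64-encoded inline image."""
    data_url = f"data:{mime};base64," + base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": MODEL,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": question},
                    {"type": "image_url", "image_url": {"url": data_url}},
                ],
            }
        ],
        "max_tokens": 256,
    }


def ask(image_path: str, question: str) -> str:
    """POST the request and return the first choice's text (needs a running endpoint)."""
    with open(image_path, "rb") as f:
        payload = build_vqa_payload(f.read(), question)
    req = urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]


# Example call against a running endpoint (file name is hypothetical):
#   print(ask("frame.jpg", "How many people are in this image?"))
```

Hosted endpoints typically also require an `Authorization: Bearer <API key>` header, which can be added to the `headers` dictionary.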

Explore NVIDIA NIM for Vision

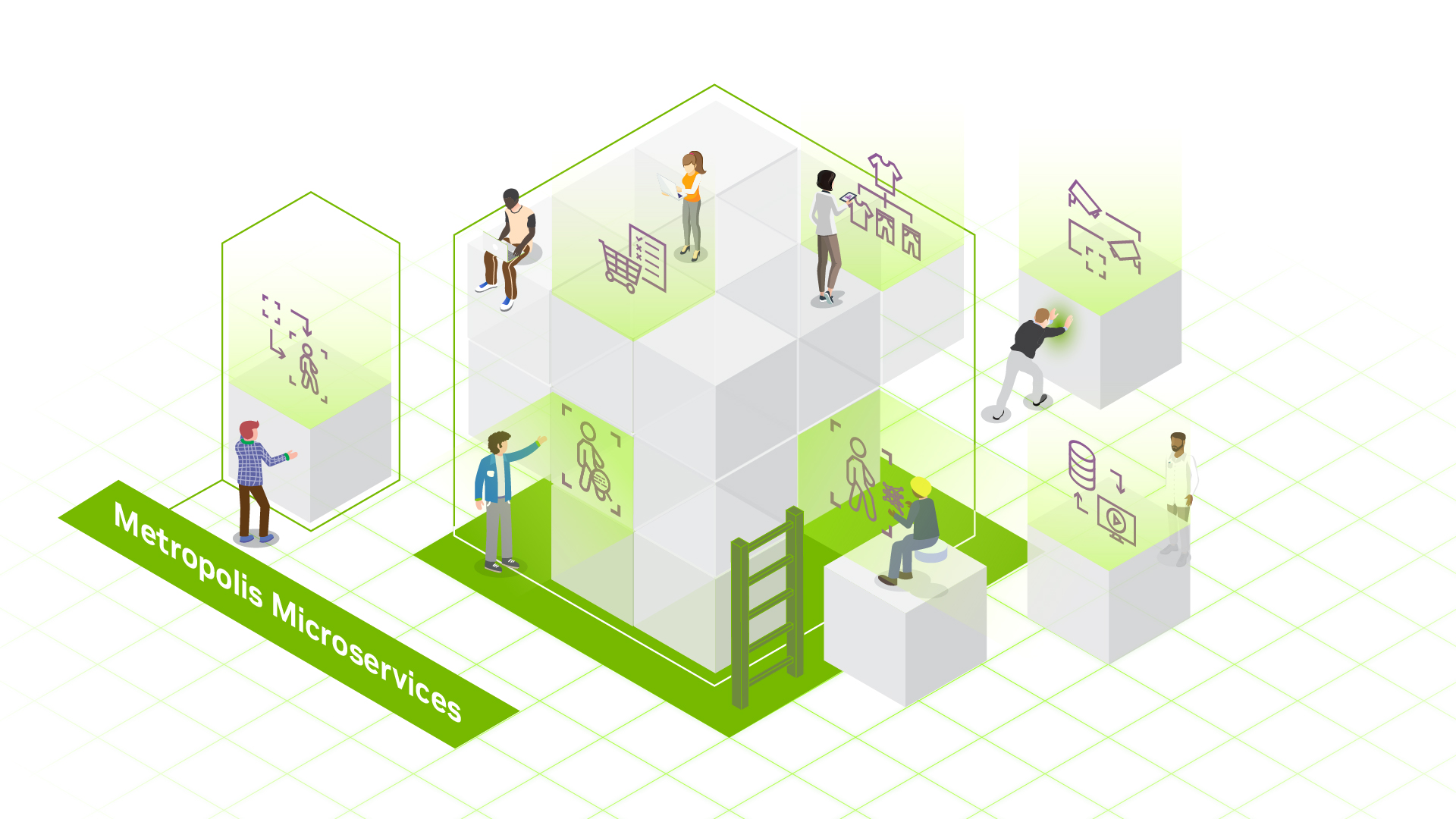

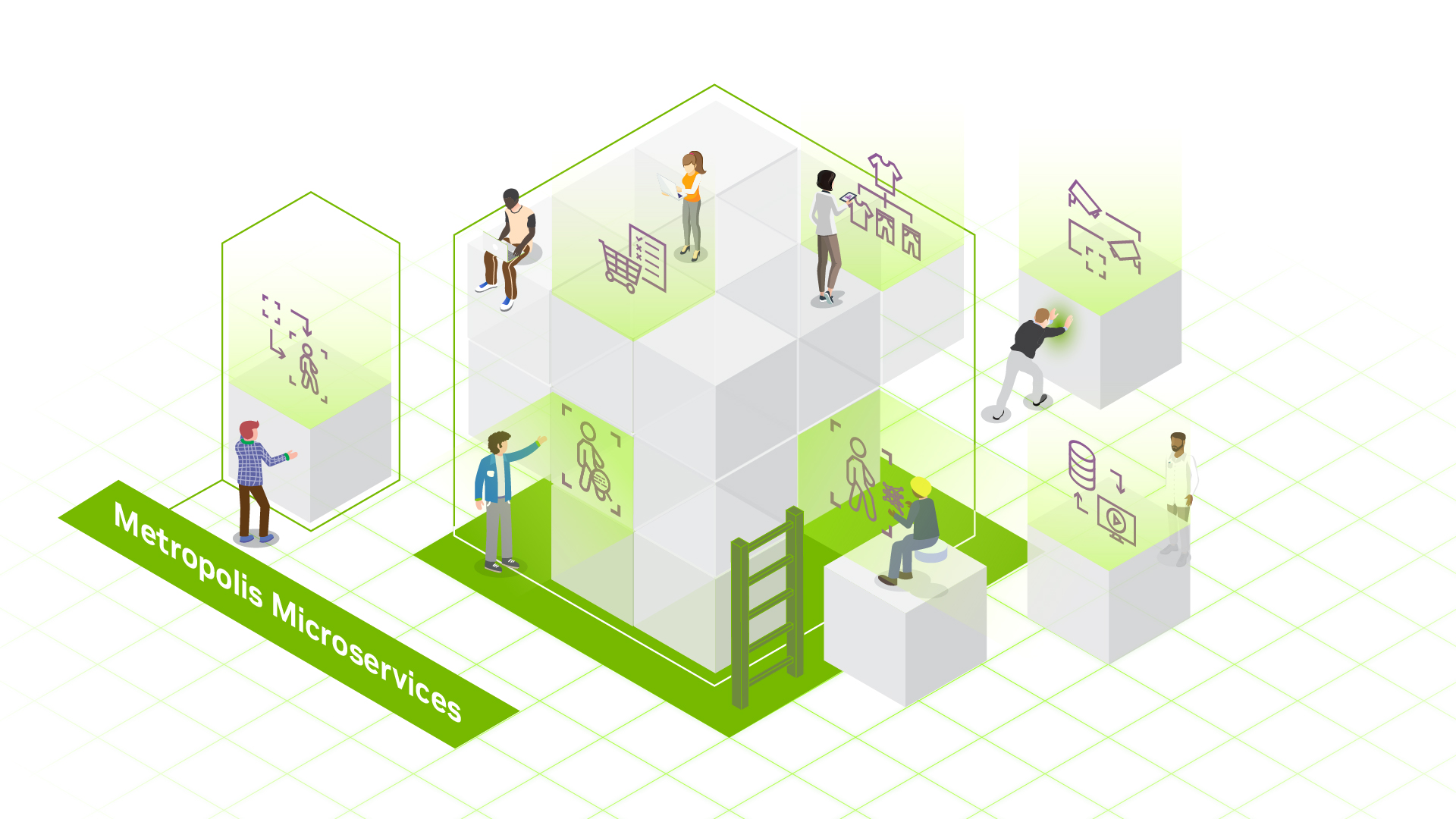

Metropolis Microservices

Metropolis microservices provide powerful, customizable, cloud-native building blocks for developing vision AI applications and solutions. They’re built to run on NVIDIA cloud and data center GPUs, as well as the NVIDIA Jetson Orin™ edge AI platform.

Learn More

DeepStream SDK

NVIDIA DeepStream SDK is a complete streaming analytics toolkit based on GStreamer for AI-based multi-sensor processing and video, audio, and image understanding. It’s ideal for vision AI developers, software partners, startups, and OEMs building intelligent video analytics (IVA) apps and services.
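
Because DeepStream is built on GStreamer, an application is a pipeline of linked elements. The sketch below assembles a `gst-launch-1.0` style pipeline description modeled on DeepStream's single-stream samples: decode an H.264 file, batch frames with `nvstreammux`, run inference with `nvinfer`, and draw overlays with `nvdsosd`. The input file and inference config file names are hypothetical, and element properties can differ between DeepStream releases, so verify them against the plugin documentation for your version.

```python
def build_pipeline(video_path: str, infer_config: str, width: int = 1920, height: int = 1080) -> str:
    """Return a gst-launch-1.0 style description: decode -> batch -> infer -> overlay.

    Paths are assumed to contain no spaces; no quoting is applied.
    """
    # Source branch: read an H.264 elementary stream, parse it, decode it on the GPU,
    # and link the decoded frames to request pad sink_0 of the muxer named "m".
    source = " ! ".join([f"filesrc location={video_path}", "h264parse", "nvv4l2decoder", "m.sink_0"])
    # Main branch: batch frames, run the detector, convert for drawing, draw boxes,
    # and discard the output (fakesink) so the sketch needs no display.
    main = " ! ".join([
        f"nvstreammux name=m batch-size=1 width={width} height={height}",
        f"nvinfer config-file-path={infer_config}",
        "nvvideoconvert",
        "nvdsosd",
        "fakesink",
    ])
    return f"{source} {main}"


desc = build_pipeline("sample_720p.h264", "pgie_config.txt")  # hypothetical file names
print(desc)
# On a machine with DeepStream installed, the description can be passed to gst-launch-1.0,
# or to Gst.parse_launch(desc) from PyGObject.
```

Building the description as a string keeps the example independent of GStreamer itself; production applications often construct the same graph element by element through the GStreamer API instead.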

Learn More About DeepStream SDK

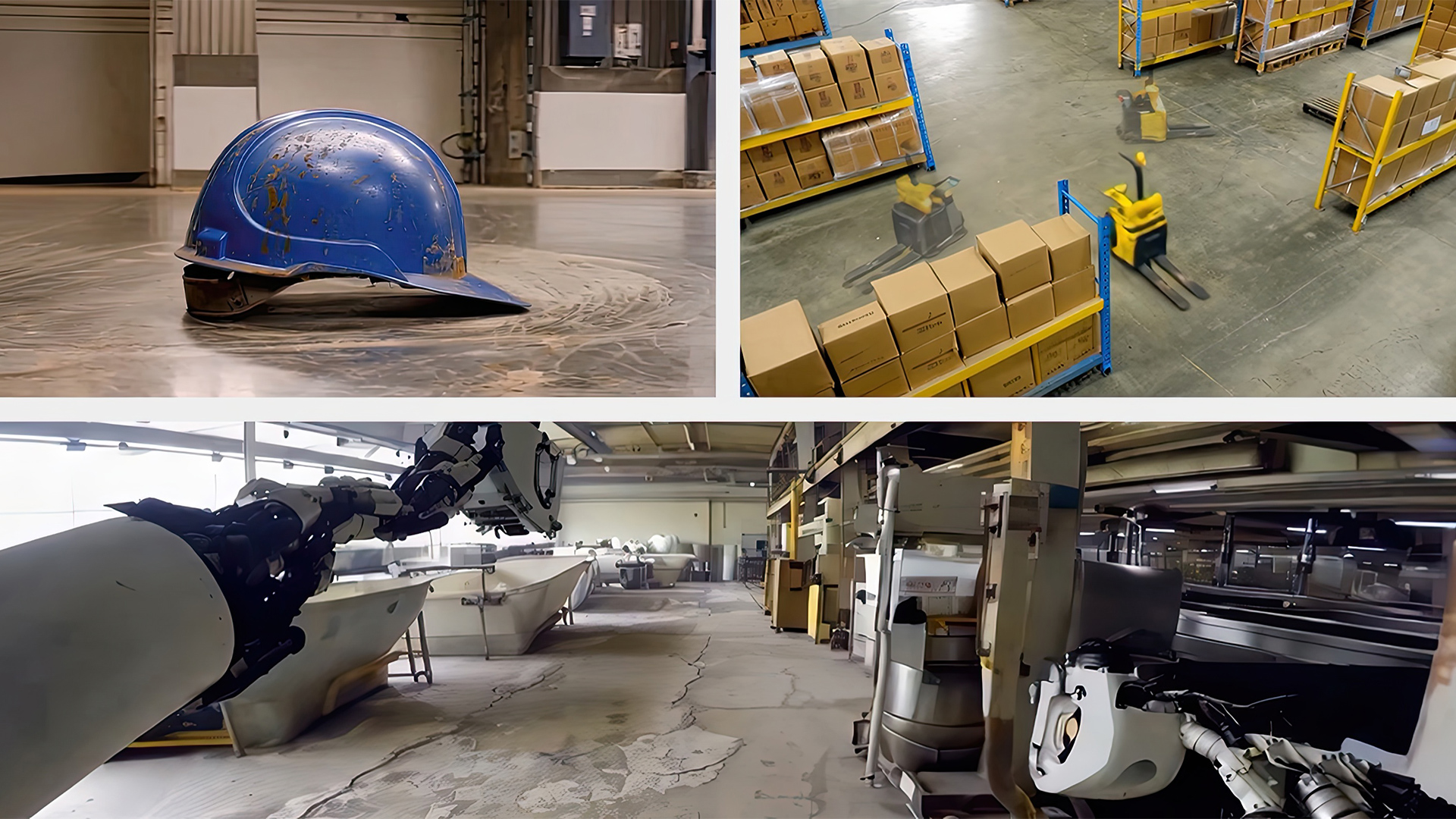

NVIDIA Omniverse

NVIDIA Omniverse™ helps you integrate OpenUSD, NVIDIA RTX™ rendering technologies, and generative physical AI into existing software tools and simulation workflows to develop and test digital twins. Used with your own software, these digital twins support continuous development, testing, and optimization of AI-powered robot brains, Metropolis perception for cameras, equipment, and more.

Omniverse Replicator makes it easier to generate physically accurate 3D synthetic data at scale, or build your own synthetic data tools and frameworks. Bootstrap perception AI model training and achieve accurate Sim2Real performance without having to manually curate and label real-world data.
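
A key property of synthetic data is that labels come for free: the generator knows exactly where it placed each object. The sketch below illustrates that idea with plain-Python domain randomization of 2D boxes. It does not use the Omniverse Replicator API; the image size, class names, size ranges, and the `light_intensity` parameter are arbitrary assumptions chosen for illustration.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class BoxLabel:
    cls: str
    x_min: int
    y_min: int
    x_max: int
    y_max: int


def randomize_scene(rng: random.Random, width: int, height: int,
                    classes: list[str], max_objects: int = 5) -> dict:
    """Sample random scene parameters and return them with exact ground-truth boxes."""
    objects = []
    for _ in range(rng.randint(1, max_objects)):
        w = rng.randint(width // 20, width // 4)    # random object size
        h = rng.randint(height // 20, height // 4)
        x = rng.randint(0, width - w)               # random position, kept fully in frame
        y = rng.randint(0, height - h)
        objects.append(BoxLabel(rng.choice(classes), x, y, x + w, y + h))
    return {
        "light_intensity": rng.uniform(0.2, 1.0),  # hypothetical parameter a renderer would consume
        "labels": objects,                          # known by construction, no manual annotation
    }


rng = random.Random(42)  # a fixed seed makes the generated dataset reproducible
dataset = [randomize_scene(rng, 1280, 720, ["person", "forklift", "pallet"]) for _ in range(3)]
for i, scene in enumerate(dataset):
    print(i, round(scene["light_intensity"], 2), len(scene["labels"]))
```

In a real pipeline, each sampled parameter set would drive a renderer to produce the image, while the labels are exported alongside it.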

Learn More About Omniverse Replicator

NVIDIA Cosmos

NVIDIA Cosmos™ is a platform of state-of-the-art generative world foundation models (WFMs), advanced tokenizers, guardrails, and an accelerated data processing and curation pipeline, built to accelerate the development of physical AI systems.

Learn More About NVIDIA Cosmos

NVIDIA Physical AI Dataset

Unblock data bottlenecks with this open-source dataset for smart space, robotics, and autonomous vehicle development. The unified collection is composed of validated data used to build NVIDIA physical AI solutions and is now freely available to developers on Hugging Face.

Start Building Today

Developer Resources

Build a Video Search and Summarization Agent

Learn how to build an AI agent for long-form video understanding using the NVIDIA AI Blueprint for video search and summarization.

VLM Reference Workflows

Check out advanced workflows for building multimodal visual AI agents.

VLM Prompt Guide

Learn how to effectively prompt a VLM for single-image, multi-image, and video-understanding use cases.

Build an Agentic Video Workflow

Learn how to build a video search and summarization workflow with audio input and speech output.

View all Metropolis technical blogs

Explore NVIDIA GTC Talks On-Demand

Develop, deploy, and scale AI-enabled video analytics applications with NVIDIA Metropolis.