This is the third post in the large language model latency-throughput benchmarking series, which aims to instruct developers on how to benchmark LLM inference with TensorRT-LLM. See LLM Inference Benchmarking: Fundamental Concepts for background knowledge on common metrics for benchmarking and parameters. And read LLM Inference Benchmarking Guide: NVIDIA GenAI-Perf and NIM for tips on using GenAI…

This is the third post in the large language model latency-throughput benchmarking series, which aims to instruct developers on how to determine the cost of LLM inference by estimating the total cost of ownership (TCO). See LLM Inference Benchmarking: Fundamental Concepts for background knowledge on common metrics for benchmarking and parameters. See LLM Inference Benchmarking Guide: NVIDIA…

The previous post, NVIDIA Blackwell Delivers up to 2.6x Higher Performance in MLPerf Training v5.0, explains how the NVIDIA platform delivered the fastest time to train across all seven benchmarks in this latest MLPerf round. This post provides a guide to reproduce the performance of NVIDIA MLPerf v5.0 submissions of Llama 2 70B LoRA fine-tuning and Llama 405B pretraining.

The journey to create a state-of-the-art large language model (LLM) begins with a process called pretraining. Pretraining a state-of-the-art model is computationally demanding, with popular open-weights models featuring tens to hundreds of billions of parameters and trained using trillions of tokens. As model intelligence grows with increasing model parameter count and training dataset size…

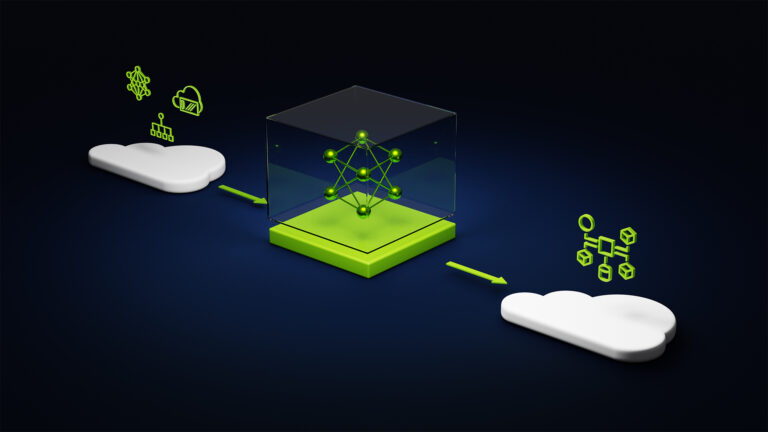

Developers and enterprises training large language models (LLMs) and deploying AI workloads in the cloud have long faced a fundamental challenge: it's nearly impossible to know in advance if a cloud platform will deliver the performance, reliability, and cost efficiency their applications require. In this context, the difference between theoretical peak performance and actual…

This is the second post in the LLM Benchmarking series, which shows how to use GenAI-Perf to benchmark the Meta Llama 3 model when deployed with NVIDIA NIM. When building LLM-based applications, it is critical to understand the performance characteristics of these models on given hardware. This serves multiple purposes: As a client-side LLM-focused benchmarking tool…

This is the first post in the LLM Benchmarking series, which shows how to use GenAI-Perf to benchmark the Meta Llama 3 model when deployed with NVIDIA NIM. Researchers from the University College London (UCL) Deciding, Acting, and Reasoning with Knowledge (DARK) Lab leverage NVIDIA NIM microservices in their new game-based benchmark suite, Benchmarking Agentic LLM and VLM Reasoning On Games…

As AI capabilities advance, understanding the impact of hardware and software infrastructure choices on workload performance is crucial for both technical validation and business planning. Organizations need a better way to assess real-world, end-to-end AI workload performance and the total cost of ownership rather than just comparing raw FLOPs or hourly cost per GPU.

In the rapidly evolving landscape of AI systems and workloads, achieving optimal model training performance extends far beyond chip speed. It requires a comprehensive evaluation of the entire stack, from compute to networking to model framework. Navigating the complexities of AI system performance can be difficult. There are many application changes that you can make…
