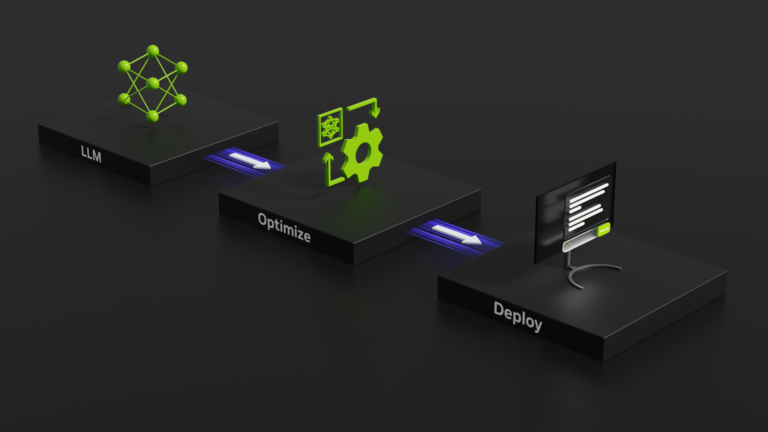

Today, NVIDIA announces the public release of TensorRT-LLM to accelerate and optimize inference performance for the latest LLMs on NVIDIA GPUs. This open-source library is now available for free on the /NVIDIA/TensorRT-LLM GitHub repo and as part of the NVIDIA NeMo framework. Large language models (LLMs) have revolutionized the field of artificial intelligence and created entirely new ways of��

]]>