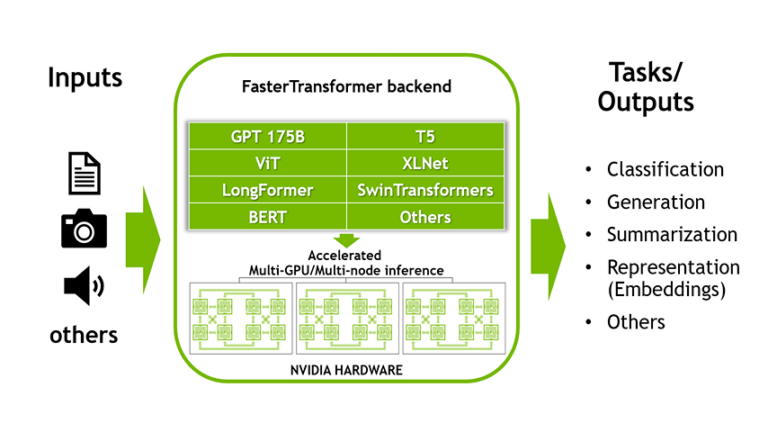

This is the first part of a two-part series discussing the NVIDIA Triton Inference Server's FasterTransformer (FT) library, one of the fastest libraries for distributed inference of transformers of any size (up to trillions of parameters). It provides an overview of FasterTransformer, including the benefits of using the library. Join the NVIDIA Triton and NVIDIA TensorRT community to stay…
